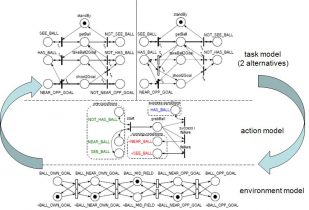

For single agents, such planning problems are naturally framed in the partially observable Markov decision process (POMDP) paradigm. In a POMDP, uncertainty in acting and sensing is captured in probabilistic models, and allows an agent to plan on its belief state, which summarizes all the information the agent has received regarding its environment. For the multiagent case, we frame our planning problem in the decentralized POMDP (Dec-POMDP) framework.

In the Intelligent Systems Lab, one of the research foci is planning under uncertainty. That is, we compute plans for single agents as well as cooperative multiagent systems, in domains in which an agent is uncertain about the exact consequences of its actions. Furthermore, it is equipped with imperfect sensors, resulting in noisy sensor readings which provide only limited information.

Software

- Markov Decision Making package for ROS, by João Messias

- MADP, Multiagent decision process toolbox by Matthijs Spaan and Frans Oliehoek

- Dec-POMDP problem domains, by Matthijs Spaan

- POMDP Solvers, repository created by Tiago Veiga – check the folder

‘SymbolicPerseusIR’ for Tiago’s POMDP-IR code, that endows POMDPs with special actions which enable rewarding beliefs instead of states, without modifying the traditional solution of PODMPs (value function remains PWLC).

- OpenMarkov – output .pgmx files are recognized by Tiago’s POMDP-IR code and MADP